Pentagon, Anthropic, and the promise of Military AI

Our goal with The Daily Brief is to simplify the biggest stories in the Indian markets and help you understand what they mean. We won’t just tell you what happened, we’ll tell you why and how too. We do this show in both formats: video and audio. This piece curates the stories that we talk about.

You can listen to the podcast on Spotify, Apple Podcasts, or wherever you get your podcasts and watch the videos on YouTube. You can also watch The Daily Brief in Hindi.

In today’s edition of The Daily Brief:

The Pentagon-Anthropic divorce

The world just keeps borrowing; is that a good thing?

The Pentagon-Anthropic divorce

Two years ago, Anthropic’s Claude became the first large language model (LLM) to operate inside the Pentagon’s classified networks. The government clearly wanted to embed it into its systems. It was fast-tracked through layers of security clearance that normally take years to navigate, and quickly found itself working across the breadth of America’s government.

Yet, last week, the Pentagon designated Anthropic a “supply chain risk to national security”, a provision that it had once used to hamstring China’s Huawei. Just as quickly as it found itself in government, Anthropic was asked to leave.

Frankly, we started this story completely confused. Why was Claude in the Pentagon at all? Why was the American military pressing a chatbot into service? Without answers to these questions, it was hard to understand why this was a big deal.

As we found out, Claude sits at the end of a long journey of military innovation. Knowing that history makes this fallout even stranger.

A history of US intelligence

Long before AI was ever a thing, the US military was already using intelligent systems.

This journey began with radar, during the Cold War, when the US lived in constant fear of a nuclear attack. To track any incoming bombers, and later, missiles, the United States connected hundreds of radars to IBM computers spread across 23 locations. Collectively, this became the Semi-Automatic Ground Environment (SAGE) defence system.

SAGE was a massive push to the world of computing. The computers assembled for it, at 275 tons, were the largest ever built. And for the first time, these were all connected together through telephone lines — a direct precursor to the internet.

The largest computer ever built

With time, SAGE became a rule-based “expert” system. Its computers would ingest large amounts of data and match patterns. What they did was pre-programmed by humans. But within very narrow domains, it let them automatically detect threats and assess risks. They even took over larger tasks like guiding missiles.

But if they ever faced new situations they weren’t coded for, they couldn’t adapt.

The problem of too much data

All these early automation successes involved tasks that required a lot of computation, which took humans a lot of time. But, ultimately, they only made simple decisions. Actual judgement calls were left to humans.

But slowly, the sheer number of judgements the military had to make was growing. After 9/11, the US started collecting massive amounts of data. For instance, between 2003 and 2009, the number of drone sorties grew tenfold. The feed from a single Predator drone required 19 on-ground analysts to parse what was happening. They didn’t have enough people for the task. Thousands of hours of surveillance footage sat unwatched, while more than one-fourth of on-ground coordinators reported being burnt out by the constant work. And the volume of data flowing in only kept increasing.

The military tried cracking this problem by making computers synthesize data. Enter Palantir.

Palantir had seed funding from the CIA. It used that to build its “Gotham” platform, which took the algorithms used to detect credit card fraud, and applied them to military data very effectively. It could take raw datastreams and turn them into a collection of objects: people, places, events, things. More importantly, it could connect very different datasets, and map non-obvious relationships between them. This was a machine that could find needles in haystacks.

For instance, plugging together two very different databases, the Pentagon realised that in Iraq, militants were tinkering with garage door clickers, turning them into remote detonators for bombs. Before this, you could only get such information from someone who had seen it first-hand.

That said, drawing connections is different from analysis. Gotham could not explain or reason through the connections it found. It could not generate hypotheses, or guess at the intent of someone else. In other words, it had a “last mile” problem. It still needed a highly trained analyst, who was comfortable with the platform. They would have to navigate its interfaces, and understand what it was saying. Even though the US could observe a lot, this bottleneck stopped it from reacting to everything it observed.

Real artificial intelligence

To close this gap, in 2017, the US launched Project Maven.

At first, Google was to provide the technology for the project. But it pulled out because its employees protested the effort; Palantir took over.

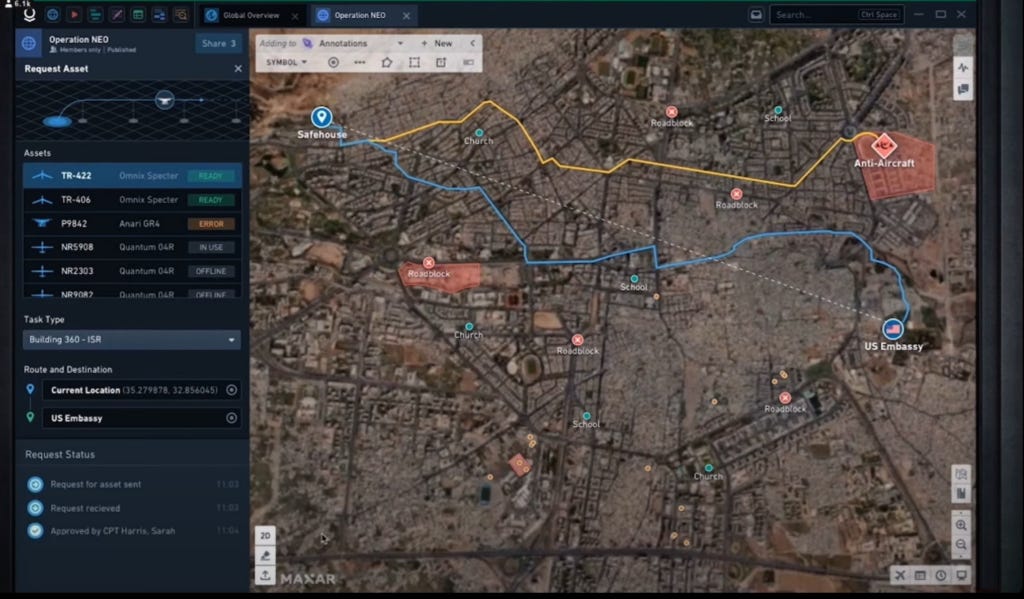

Project Maven used computer vision to process its data. Through deep learning, the model began making sense of those datafeeds. It learnt to understand the layout of a battlefield; what enemy property was worth attacking, and what one ought to avoid, like hospitals. It could spot new constructions, or key changes on the map.

AI had officially come to warfare. Commanders quickly realised how useful AI could be, much like most of us did last year. By 2024, Maven was being used across the world, from Ukraine to Yemen.

But there was a new layer worth adding: Maven would benefit from a large language model.

Sometimes, the military needed to process hundreds of thousands of pages of text coming in live, like situation or intelligence reports. Analysts, too, needed the ability to ask questions in plain English, instead of learning how to work with every single one of the platform’s interfaces. And you needed a system that could stitch datastreams into intelligence reports, which humans could understand.

That is how Claude found its way in. In November 2024, Claude 3 and 3.5 were added to Palantir’s systems. Those systems still did the heavy-lifting; they linked all the data and operational systems together. But Claude was now added to the mix. It was the first AI model on Pentagon networks.

Marriage / divorce

The moment it entered US defence, Claude’s footprint grew rapidly.

Usually, there are many layers of bureaucracy one must cross to enter highly classified defence networks. Claude was fast-tracked through them. Over just eight months, in quick succession, it was approved for general government use, and then was given the thumbs-up to become a defence contractor. Clearly, the government wanted it there fast.

For these new projects, Anthropic created Claude Gov — a special version of its model that worked safely on classified systems, and was particularly good for military intelligence tasks. By late 2025, Claude was completely embedded into Maven. Now, the platform could produce intelligence analysis, from start-to-end, with no humans involved.

But there was an issue. Anthropic had a tight set of guardrails on what its models would or would not do. They would not be allowed to make weapons, for instance, or surveil others. But that was exactly the world it was entering.

The exceptions

In June 2024, Anthropic announced that it would modify those guardrails. It would make exceptions for the government. For instance, it would let the government use its models for “foreign intelligence operations.” There were some red lines it stuck to, though: the “design or use of weapons” and “cyber operations” were still out of bounds.

But slowly, its ambitions were growing.

Midway through last year, it was one of the four AI labs that entered exploratory agreements with the US Department of Defence, for a maximum of $200 million. Under this, it would talk to the Department, try understanding their needs across areas like intelligence or warfighting, build “agentic” prototypes, and see what worked and could be scaled. Shortly thereafter, it decided to offer itself to all branches of the US Government for a single dollar.

As these entanglements increased, Anthropic had to offer more concessions. In contract negotiations last December, Anthropic dropped its reservations on cyber or missile defence. Theoretically, you could now tell Claude to fire missiles, and it would comply.

There were two exemptions Anthropic wasn’t willing to budge on, however: domestic surveillance, and creating fully autonomous weapons. But the Pentagon wasn’t willing to listen.

“All lawful purposes”

As this year began, the US raided Venezuela and captured its head of state, Nicolás Maduro. News reports claimed that Claude had been used for this. If they were true, this would be a major escalation in the use of LLMs in war. And Anthropic had no idea.

So, during a check-in, Anthropic asked Palantir about the operation, and whether its models were put to use. To the Pentagon, this seemed like a threat. If Anthropic didn’t like the answer it got, could its models refuse a request?

And that’s when things went downhill, fast.

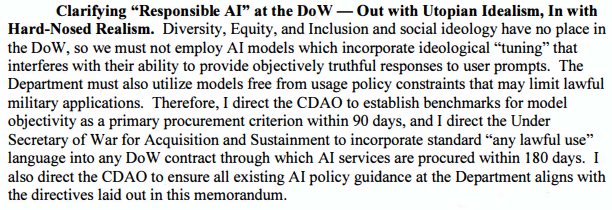

On January 9, Pete Hegseth, the American Defence Secretary, released a memorandum on AI, which laid a theoretical groundwork for America dominating military AI. Hidden in there was a jab at Anthropic. The memorandum claimed that there was no space for “utopian idealism” in the military. Instead, in the next six months, all its AI contracts would be renegotiated, until the US military could use those models for “any lawful use”.

Hegseth didn’t wait that long, anyway. On February 24, Anthropic was given less than four days: it had to sign away its model “for all lawful purposes” by 5 PM that Friday — else, the Pentagon would invoke a barrage of laws.

But Anthropic refused.

Here, things get murky. The Pentagon claims that Anthropic was claiming veto powers over the American government. In their telling, if there’s a missile heading towards the US, for instance, you don’t want to find out that Claude’s guardrails don’t let you respond. But what “lawful use” was it being denied?

From both Anthropic’s public stance and its leaked internal documents, it seems like its biggest fear is that its models will be used to surveil Americans, and the US government has lots of data on its citizens. It has already found legal loopholes to do so. But so far, that data was a fragmented mess, until LLMs came around with the ability to construct, from that messy data, a scarily accurate portrait of someone’s personal life.

Now, Anthropic seems fine with processing data from foreign countries — hence the words “mass domestic surveillance”. If we understand this right, our digital trail is already vulnerable.

The fallout

The Department of Defence made two slightly contradictory legal threats. One, it would use the Defence Production Act, which lets the US government forcibly procure emergency war-time supplies. In this argument, Anthropic was indispensable to national security.

At the same time, it would designate Anthropic as a “supply chain risk” — a designation reserved for companies from adversary nations, like Russia’s Kaspersky, or China’s Huawei. Here, Anthropic was a threat to national security. With this designation, no vendor to the US Department of Defence could use Anthropic for any national security purposes.

In fact, the government claimed even wider powers: that no vendor to the US Department of Defence could work with Anthropic in any manner, whether for Pentagon contracts or otherwise. That would effectively blacklist Anthropic from America’s entire tech ecosystem, since most major tech companies — including Anthropic investors like Amazon and NVIDIA — would be blocked from working with them.

So far, the government hasn’t pressed the first claim. But last week, it formally designated Anthropic a supply chain risk. Meanwhile, the White House took things even further, instructing the entire US government to break ties with Anthropic, whether they had anything to do with defence or not.

Anthropic has just sued the government over this. This is where things stand.

Making an example

Ever since the Second World War, America’s military had struggled to make sense of all the information it had. In 2024, the capabilities to do so finally arrived. America finally had a way of understanding what its sprawling surveillance networks told it.

Perhaps that power, eventually, was too seductive: the US Government would brook no restriction on it, not even the privacy of its own citizens.

To our eyes, this reaction was meant to turn Anthropic into an example. The larger story — of LLMs becoming a tool of war — isn’t going anywhere. Within days of the Anthropic fiasco, OpenAI and xAI had already cut deals with the government. The company that replaces Anthropic, presumably, is the one that asks the fewest questions.

The world just keeps borrowing; is that a good thing?

The OECD, the club of 38 wealthy, developed nations, publishes a global debt report every year that tracks developments in the world’s bond markets. How much are governments and companies borrowing? At what cost? And who, exactly, is lending to them?

We went through the entire 157-page thing. It’s incredibly dense and technical — not least because it drills down into the nitty-gritties of bonds, which is one of the hardest things to talk about. The jargon is thick, the mechanics are unintuitive, and a 157-page OECD report doesn’t make it any easier.

We’re going to try to break down the most important ideas here, but that means we obviously can’t cover everything the report says. We genuinely recommend reading the report itself to get the full picture.

Now, let’s go over some basics first.

When a government spends more than it earns from taxes (which is something that happens all too often), it borrows the difference by issuing bonds. A bond is really just an IOU: the government says, “give me ₹100 today, I’ll pay you 4% interest every year, and I’ll return your ₹100 in ten years”. An institution like a bank, a pension fund, or a hedge fund hands over the money, and the government spends it.
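To make the IOU concrete, here is a minimal Python sketch of the cash flows from the example bond above. The function name `bond_cash_flows` and its parameters are our own illustration, and it assumes a plain bond that pays a fixed coupon once a year and returns the principal at maturity.

```python
def bond_cash_flows(face_value, coupon_rate, years):
    """Yearly payments to the lender for a plain fixed-coupon bond (illustrative)."""
    coupon = face_value * coupon_rate
    # Interest only in every year but the last...
    flows = [coupon] * (years - 1)
    # ...and interest plus the original principal in the final year.
    flows.append(coupon + face_value)
    return flows


# The example from the text: lend 100 today at 4% for ten years.
flows = bond_cash_flows(100, 0.04, 10)
total_received = sum(flows)
```

Under these assumptions, the lender receives 4 a year for nine years and 104 in the tenth year, or 140 in total for the 100 lent.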

Companies borrow similarly, too. But corporate bonds are perceived as being riskier than government bonds, because unlike a government, companies go bankrupt all the time. So, they pay a higher interest rate. The gap between what a corporate bond pays and what an equivalent government bond pays is called the credit spread. And it tells you how risky the market thinks that company is.

The global bond market, which includes every government and corporate bond currently outstanding, adds up to a whopping $109 trillion. It is, by a wide margin, the single most important financial market on the planet, because government bond yields set the baseline cost of borrowing for everyone. When government bond yields rise, everything — from mortgages, to business loans, to consumer credit — gets more expensive.

The loans are not enough

That’s the context in which the OECD report operates.

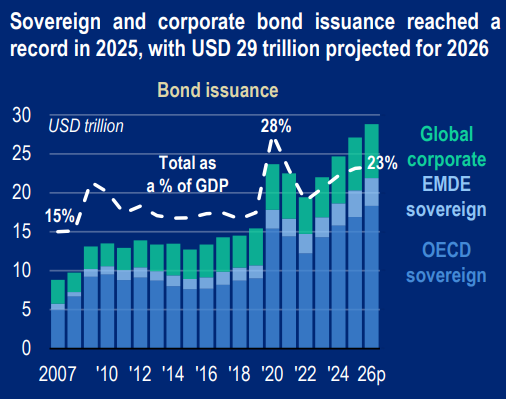

The 38 OECD governments collectively borrowed $17 trillion in 2025. Companies in OECD regions globally borrowed another $13.7 trillion. Together, that’s over $30 trillion flowing through debt markets in a single year, projected to rise further in 2026.

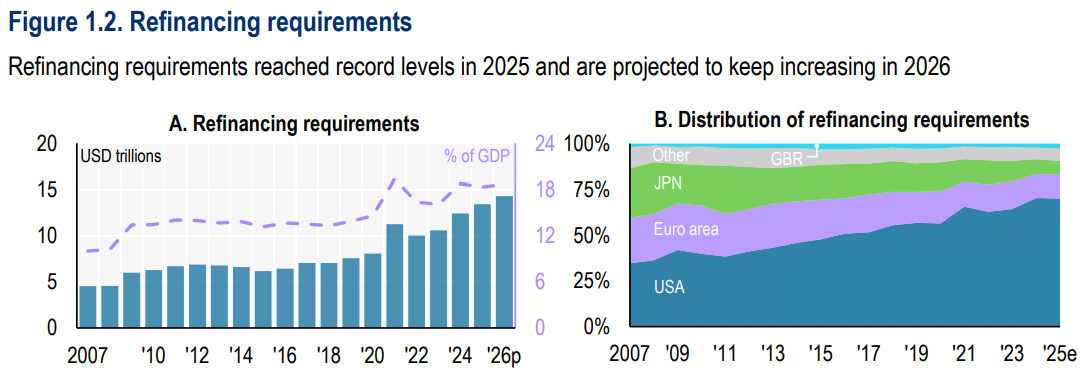

Interestingly, the vast majority of government borrowing wasn’t new spending. Rather, it was refinancing: paying off old loans that came due with new loans or bond issues. About $13.5 trillion, nearly 80%, was just this sort of refinancing. Think of it like a homeowner whose five-year loan is expiring, and who doesn’t have the cash to pay it off. So they take a new loan to pay off the old one.

The problem is that the new loan costs a lot more.

Between 2015 and 2021, interest rates across the developed world were extraordinarily low, and in some countries actually negative. Much of the borrowing during this period wasn’t because governments were taking advantage of cheap rates. It was because they had to, especially during COVID. But the effect was the same: a huge stock of debt got locked in at near-zero costs. Then inflation surged in 2022, central banks raised rates, and suddenly new bonds had to offer 4-5%.

Now, the old cheap bonds themselves didn’t change. If you issued at 0.7%, you kept paying 0.7% until the bond matured. But every year, some of those bonds came due and needed to be replaced at much higher rates. One-third of all OECD fixed-rate debt matures between 2026 and 2028, and current market yields are about 2 percentage points higher than what those maturing bonds were paying. Across trillions of dollars’ worth of bonds in the market, that gap translates into hundreds of billions in additional yearly interest costs.
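A rough back-of-the-envelope sketch shows how a gap of about two percentage points turns into hundreds of billions. The text does not give the size of the fixed-rate debt stock, so the $45 trillion below is purely a hypothetical placeholder, and the function name is our own. The sketch also assumes the whole maturing slice is rolled over at the higher yield.

```python
def extra_annual_interest(debt_stock, maturing_share, yield_gap):
    """Additional yearly interest once the maturing slice is refinanced at higher yields."""
    refinanced = debt_stock * maturing_share
    return refinanced * yield_gap


# Hypothetical stock of fixed-rate debt: 45 trillion dollars (NOT a figure from the report).
HYPOTHETICAL_STOCK = 45e12

# One-third of the stock matures, and new yields are about 2 percentage points higher.
extra = extra_annual_interest(HYPOTHETICAL_STOCK, maturing_share=1 / 3, yield_gap=0.02)
print(f"Extra interest per year: ${extra / 1e9:,.0f} billion")
```

With these placeholder inputs the extra bill comes to roughly $300 billion a year. A different assumed stock would scale the answer proportionally.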

There’s another nuance to this: inflation used to help with this rolling over of debt. That sounds weird, but let us explain.

The OECD measures the sustainability of a country’s debt with the debt-to-GDP ratio: the total debt divided by the size of the economy. GDP here is measured in nominal terms, meaning that it isn’t adjusted for rising prices. So, when inflation is high, GDP swells simply because everything costs more, and not necessarily because the economy is producing more. That makes the ratio look better, even if the actual debt hasn’t changed.

From 2021 to 2025, inflation was high enough that this effect more than offset the rising interest bill. But now inflation has come back down, while the interest bill keeps growing. For 2026, the report projects that, for the first time, interest payments will push debt up faster than the fading inflation boost to GDP can offset. The net effect is an increase in the debt-to-GDP ratio, meaning that debt has become less sustainable.
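The pull between the interest bill and nominal GDP can be sketched with the standard textbook debt-dynamics identity. This is our own simplified illustration, not the OECD’s model, and every number in it is hypothetical: a country that starts with debt equal to 100% of GDP and runs no primary deficit.

```python
def next_debt_ratio(ratio, interest_rate, nominal_growth, primary_deficit=0.0):
    """One-year update of the debt-to-GDP ratio.

    Debt grows with interest, GDP grows in nominal terms (real growth plus
    inflation), and any primary deficit (as a share of GDP) adds new debt.
    """
    return ratio * (1 + interest_rate) / (1 + nominal_growth) + primary_deficit


# Hypothetical high-inflation year: nominal GDP grows 8%, debt costs 3%.
high_inflation = next_debt_ratio(1.00, interest_rate=0.03, nominal_growth=0.08)

# Hypothetical low-inflation year: nominal GDP grows 3%, debt costs 4%.
low_inflation = next_debt_ratio(1.00, interest_rate=0.04, nominal_growth=0.03)

print(f"{high_inflation:.3f} {low_inflation:.3f}")
```

In the first case the ratio falls to about 95% even though no debt was repaid. In the second it creeps up to about 101%, because interest now outpaces nominal growth.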

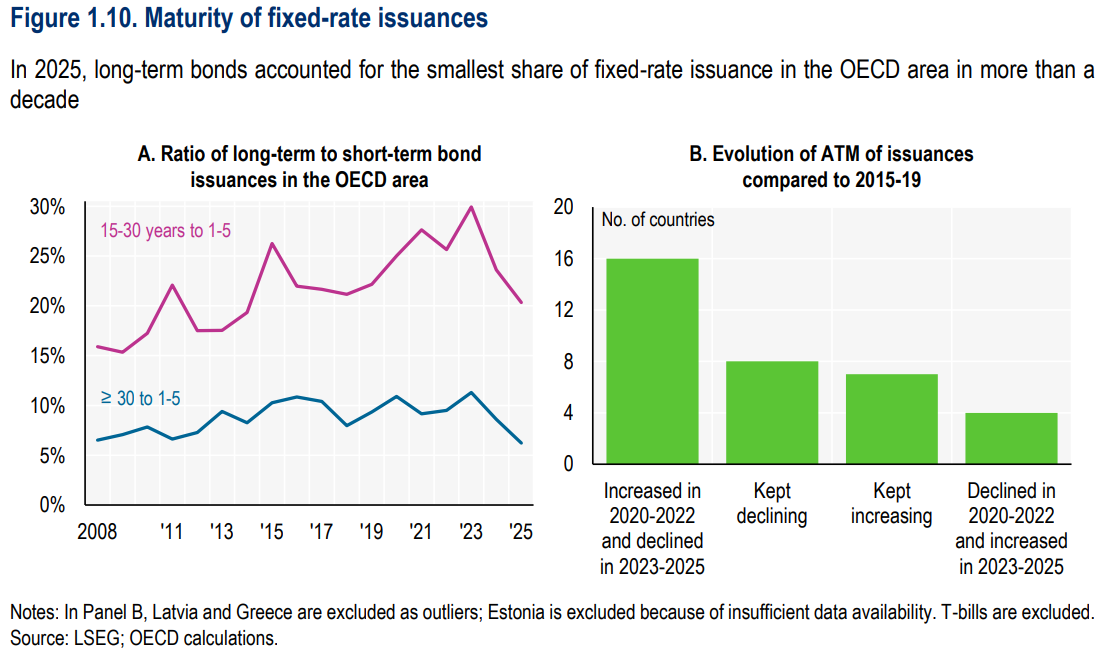

How have governments responded? Well, they’re borrowing shorter. Treasury bills, which mature in under a year, now account for 48% of total OECD borrowing. The share of issuance with maturities over 10 years hit its lowest since 2009.

The logic makes intuitive sense, too. Why lock in expensive 30-year debt when you can borrow more cheaply for a few months? But on the flip side, you have to come back to the market much sooner. If the spot rates also turn out to be expensive, then refinancing in just a few months will be painful compared to locking in rates well in advance.

During the 2008 financial crisis, governments also ramped up short-term borrowing, then reverted to normal within two years. After COVID, the same spike happened. But this time, the short-term share didn’t normalise. It started climbing again in 2023 and hasn’t come back down.

Are tight credit spreads telling the truth?

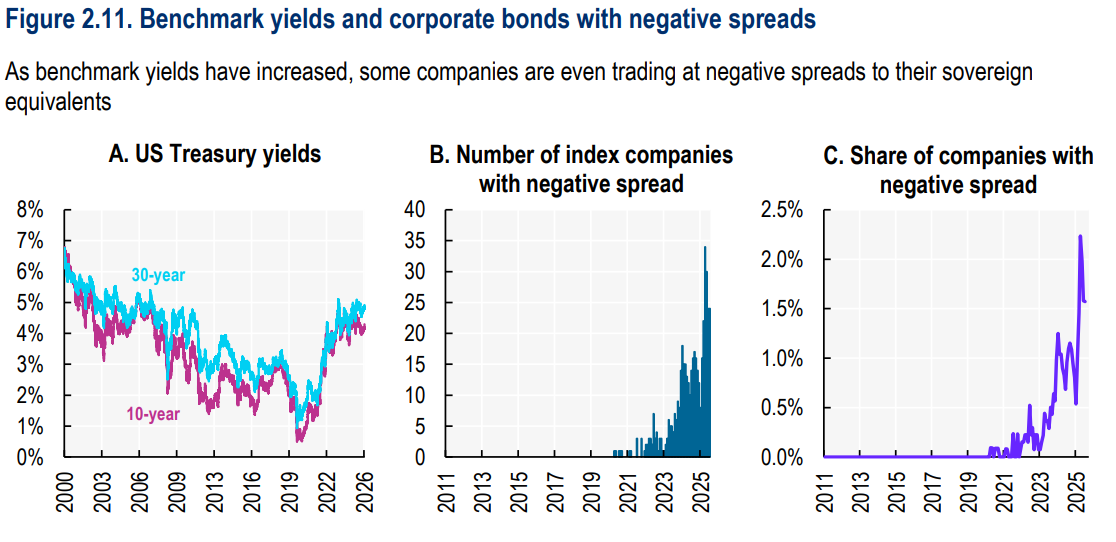

On the corporate side, the puzzle is different. Credit spreads are near all-time lows, which looks like the market saying corporate credit risk has never been lower. The report argues that’s misleading.

Part of the tightness reflects genuine health: corporate cash levels are solid, earnings forecasts are strong, and default rates are falling. But a big chunk of the compression may have nothing to do with whether companies are actually safer.

Why so? Well, a credit spread is the gap between what a company pays and what the government pays. It’s a relative measure. So if government borrowing costs go up, the baseline shifts, and the gap shrinks, even if nothing about the companies has changed. And while still rare, strikingly, some companies even trade at negative spreads to their own governments.
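Because the spread is just a difference, a few lines of Python are enough to show the effect. The yields below are hypothetical, the function name is our own, and the sketch deliberately holds the company’s yield fixed while the government’s moves.

```python
def credit_spread_bps(corporate_yield_pct, government_yield_pct):
    """Credit spread in basis points (1 percentage point = 100 basis points)."""
    return round((corporate_yield_pct - government_yield_pct) * 100, 2)


# Hypothetical yields, in percent.
before = credit_spread_bps(5.0, 4.0)    # company pays 5.0%, government pays 4.0%
after = credit_spread_bps(5.0, 4.5)     # only the government yield has moved
negative = credit_spread_bps(4.3, 4.5)  # company borrows more cheaply than its government
```

Here the spread halves from 100 to 50 basis points without anything changing at the company, and the last case gives a spread of -20 basis points.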

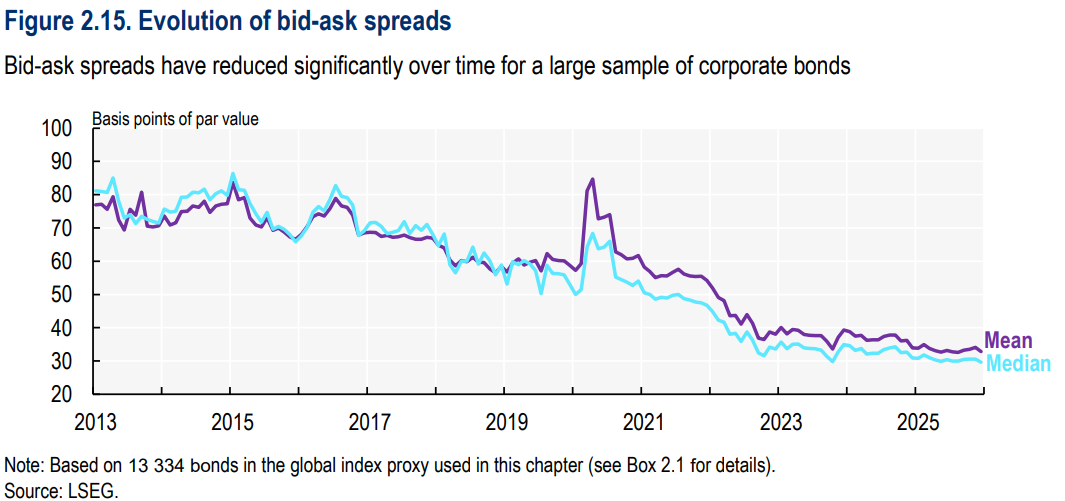

This phenomenon is also related to how corporate bonds are traded. Since 2008, electronic platforms, ETFs, and algorithmic trading have transformed a market that used to be slow, opaque, and phone-based. Bid-ask spreads fell from 77 basis points in 2013 to 33 in 2025. The report estimates that about a third of the credit spread compression since 2013 came purely from improved liquidity in the market. Investors used to demand extra yield because corporate bonds were hard to sell, but now, that’s less true.

Layered on top of this is the AI story. Nine major tech firms raised $122 billion from bond markets in 2025, with projected capex of $4.1 trillion between 2026 and 2030. For comparison, total capex by all non-financial US companies was about $3 trillion last year. Can bond markets absorb AI-related borrowing alongside record government issuance? There’s no definitive answer to that question yet, but three of our older stories may help you think about it more deeply.

The buyers have changed

The report also dove into who is buying all this debt, and how the mix of the investor base has changed.

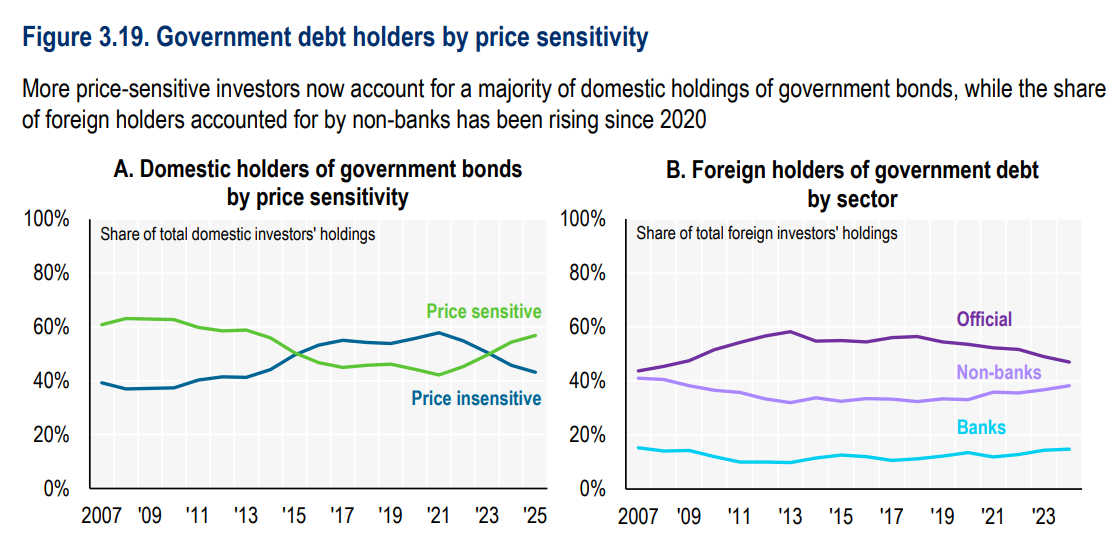

Between 2015 and 2021, central banks were the dominant buyers: the US Federal Reserve buying Treasuries, the ECB buying Euro area bonds, the Bank of Japan buying JGBs, and so on. This is also known as quantitative easing, where central banks create money to buy their own government’s debt, pushing yields down across the economy.

Central banks peaked at 22% of the combined bond market in 2022. But since then, they’ve stepped back to 15%. Basically, the biggest, most patient, most price-insensitive buyer is withdrawing.

Demand from pension funds has also gone down. That’s because of the shift from defined-benefit to defined-contribution pension schemes — most dramatically in the Netherlands and the UK. Defined-benefit funds have to buy long-dated government bonds to match future payout obligations, but defined-contribution funds don’t.

So if central banks are buying less, and pension funds are buying less, but governments are issuing more than ever — who’s picking up the slack?

Enter hedge funds, mutual funds, ETFs, and retail investors. Central banks and defined-benefit pensions bought because they had to. The new buyers are in it because they want to be — for profit, of course. Hedge funds now account for nearly a third of US Treasury secondary market trading. On platforms like Tradeweb, they make up a majority of sovereign bond trading in the UK and Europe. More than half of surveyed government debt offices say hedge funds are now the marginal buyers of their bonds.

On a calm day, this works well. But this also means they’ll leave when conditions change or they don’t see enough upside. Hedge funds use a lot of borrowed money to make their trades. If prices fall, their lenders can demand more cash or force them to sell — pushing prices down further, triggering more forced selling. It feeds on itself. In fact, this is just what happened in April 2025 when US tariff announcements caused a Treasury sell-off, amplified by hedge funds unwinding positions.
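The first step of that spiral can be sketched for a single fund. This is a deliberately simplified, hypothetical setup: one fund, lenders who cap leverage (assets divided by the fund’s own equity) at a fixed multiple, and sale proceeds used to repay debt. The function name and numbers are ours.

```python
def forced_sale(assets, debt, price_drop, max_leverage):
    """Assets a leveraged fund must sell after a price fall to get back under its leverage cap.

    Leverage is assets divided by equity; equity is assets minus debt.
    """
    assets_after = assets * (1 - price_drop)
    equity = assets_after - debt
    if equity <= 0:
        # Losses have wiped out the fund's own money: everything gets liquidated.
        return assets_after
    # Selling bonds and repaying debt leaves equity unchanged, so the cap
    # pins down how many assets the fund is allowed to keep.
    target_assets = max_leverage * equity
    return max(0.0, assets_after - target_assets)


# Hypothetical fund: 100 of bonds, 90 of it borrowed, leverage capped at 10x.
sale = forced_sale(assets=100.0, debt=90.0, price_drop=0.02, max_leverage=10.0)
```

In this example a 2% fall in prices forces the fund to sell about 18 of its remaining 98 in bonds. If that selling pushes prices lower still, the same calculation runs again for every leveraged holder, which is the feedback loop described above.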

What should I make of all of this?

For one, the absence of a crisis doesn’t mean there is little or no risk.

Bond auctions may be going smoothly and volatility may be moderated. But that resilience rests on credible monetary policy, fiscal discipline, and institutional trust — foundations increasingly under pressure. The buyer base has shifted in ways that make markets more liquid on good days, but more brittle on bad ones.

And the bad day, if and when it comes, could arrive into a market carrying more debt, at higher cost, with shorter maturities, and held by people less inclined to stick around.

Tidbits

Dixon Technologies jumps after JV approval with HKC

Shares of Dixon Technologies rose about 13% after the government approved its joint venture with HKC Overseas to manufacture display modules in India. The JV will produce LCD and TFT-LCD modules used in smartphones, laptops and TVs. Analysts say the move could boost Dixon’s margins as the company increases local component manufacturing.

Source: Business Standard

India declines IEA call to release strategic oil reserves

India has refused the International Energy Agency’s request to release oil from its strategic reserves despite rising global crude prices. Officials said the reserves are meant for supply disruptions, not price management. India currently holds about 5.33 million tonnes of reserves, which are around 80% full.

Source: The Economic Times

Indonesia to acquire BrahMos supersonic missiles

Indonesia has reached an initial agreement with India to acquire BrahMos supersonic cruise missiles, becoming the second country after the Philippines to buy the system. The deal is part of Indonesia’s effort to modernise its defence capabilities, especially in maritime security. The final contract value has not yet been disclosed.

Source: The Hindu BusinessLine

- This edition of the newsletter was written by Pranav and Krishna.

